The Convergence of

Science and Economy

The future of humanity is

overwhelmingly interesting

Overwhelming innovation, amazing ideas, and

even funny humor, interesting things are born

from the intersection of different elements.

Araya will integrate cutting-edge AI and neurotech into business and daily life.

We aim to create an overwhelmingly exciting future for workers,

researchers, and all people in their daily life.

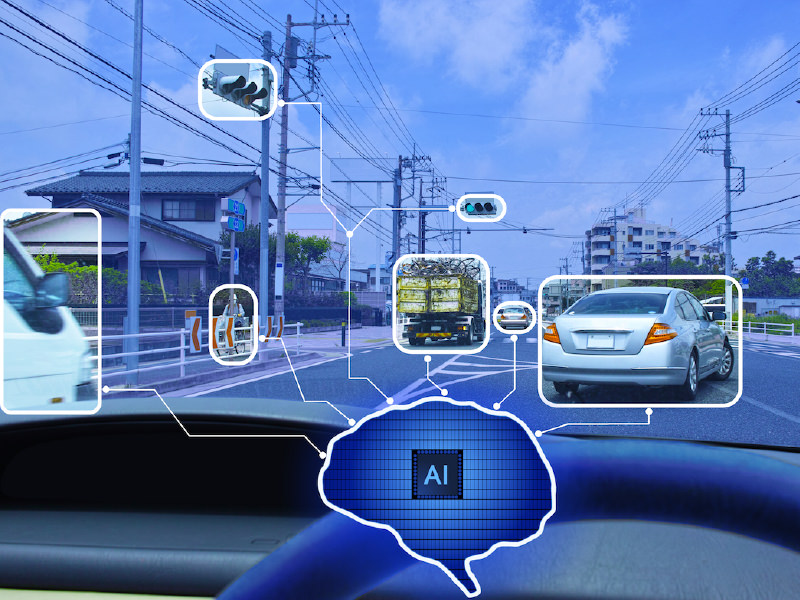

BUSINESS

All things AI

In today’s world, where new technologies

emerge and are

shared daily, Araya unlocks the potential of cutting-edge AI

across various

aspects of business to create new value. Araya’s

AI work generates innovative solutions

that bridge the gap

between research

and society.

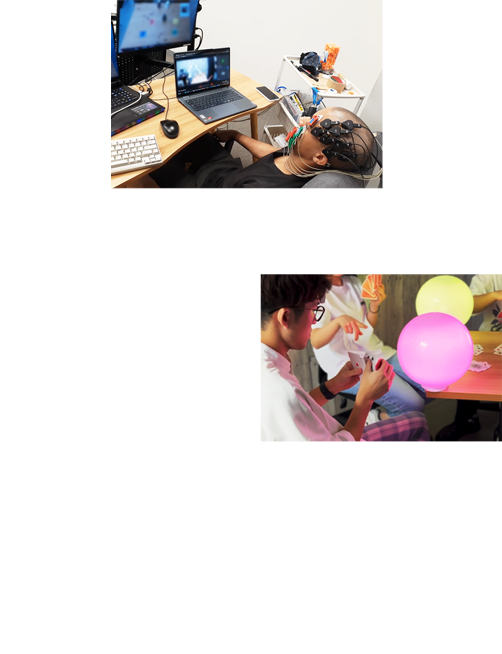

RESEARCH

Brain x AI for Humanity

- A New Step Forward

How does consciousness arise? How can we develop Brain-Machine

Interfaces (BMI) that connect humans and AIs? Through extensive

research, ranging from fundamental to applied studies, we strive to

enhance people’s lives by integrating our findings from both

academic and practical domains

PROJECTS

Introduction to AI implementation,

development, and utilization cases

AI活用事例

インフラ点検における画像認識AI活用

AI活用事例

フォークリフトなどの車両と人の接触防止への画像認識AI活用

AI開発事例

商業施設・店舗におけるインストアマーケティング・顧客行動分析への画像認識AI活用

AI活用事例

自動車メーカー向けエッジAI開発におけるOFA(Once-for-All)適用

AI開発事例

AI/強化学習により空調の最適化を行い、快適性を維持しながら大幅な消費電力の削減を目指します

COMPANY

With AI and Neurotech,

the future of humanity

is overwhelmingly

interesting.

Backed by our expertise and proven advanced AI solutions, and with reliable neurotechnologies developed by our researchers, we will lead the way to an overwhelmingly interesting future.

At Araya, we are looking for passionate and driven individuals to join our team.

Contact us

Contact us